
Shopping online has come a long way, and when it comes to saving money, you’ve got plenty of options. Honey is one of the most popular coupon browser extensions on the internet, but it is far from the only one. There are tons of services you can try, each with its own spin.

To save you some time figuring out which one is best for you, we’ve compiled a list of six other money-saving apps like Honey, along with some ideas on how to make the most of them.

We’ll start with a reminder of what Honey does and then get into our favorite alternatives.

What Is Honey?

Honey is a plugin for all major browsers that helps users save money while shopping online by automatically searching for and applying relevant coupon codes at checkout.

When you have the extension installed, you receive notifications during the checkout phase on any eligible store. If you hit accept, Honey will automatically search its massive database of online coupons and apply the best one. It also has coupon codes and exclusive deals that can be used to earn “Honey Gold,” points that you accrue over time and can redeem for gift cards.

To be clear: Honey is a great extension, but people have reported bugs, and some take issue with the data it collects (something probably all of these extensions do). Having other options means you can use a combination, find one that is better suited to your needs, or both.

How to Choose the Best Coupon Browser Extension

Once you dig into the world of cost-saving extensions and apps, one thing becomes clear: most of these apps function and behave very similarly.

With that in mind, the best choice is actually a combination. Because these extensions and apps cover different retailers and strike timed deals with stores, the best deals can change at any point. For example, some may be better for finding deals on clothing than on software, or for back-to-school deals in August.

That being said, if you’re prioritizing cashback opportunities, it may be best to invest more in a single platform instead of spreading out your earnings across multiple services. Diversifying too much can increase the amount of work it takes to earn and may keep you from reaching redemption thresholds (some apps won’t let you cash out until you earn a certain amount).
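To make the threshold point concrete, here is a minimal sketch in Python. The app names and dollar figures are made up for illustration (the $25 minimum simply mirrors the cash-out floor listed for Piggy later in this article), and real apps may calculate payouts differently.

```python
def cashable(earnings_by_app, thresholds):
    """Return the total cashback that can be withdrawn right now.

    An app's balance only counts once it reaches that app's minimum payout.
    """
    return sum(
        amount
        for app, amount in earnings_by_app.items()
        if amount >= thresholds[app]
    )


# Hypothetical numbers: $30 of cashback earned in total,
# with a $25 minimum payout on each of three made-up apps.
thresholds = {"app_a": 25.0, "app_b": 25.0, "app_c": 25.0}

# Same $30, either spread evenly or earned through one app.
spread = {"app_a": 10.0, "app_b": 10.0, "app_c": 10.0}
focused = {"app_a": 30.0, "app_b": 0.0, "app_c": 0.0}

spread_total = cashable(spread, thresholds)
focused_total = cashable(focused, thresholds)

print("Spread across three apps:", spread_total)
print("Focused on one app:", focused_total)
```

With these assumed numbers, the same $30 is fully withdrawable when it sits in one app, while none of it can be cashed out when it is split three ways.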

And finally, if you’re using these extensions as an exercise in budgeting, remember that these apps exist to get you to buy more. It may seem like you’re saving cash, but if you just end up shopping more than you used to, then it’s a net negative. If you aren’t concerned with that and are comfortable building them into your existing shopping habits, then they can be fantastic.

1. Capital One Shopping (formerly Wikibuy)

Capital One Shopping, which used to be Wikibuy, is the most direct competitor to Honey. It provides almost the same experience, with automatically applied coupon codes, price alerts, and more.

How Capital One Shopping Works

Capital One Shopping helps you shop online, which in turn lets Capital One collect more transaction fees and useful data about you. Anytime you’re looking at a product online, the extension will search thousands of other retailers to see if you could buy it for less somewhere else or if a coupon code applies.

You’ve got options with Capital One Shopping, too. You can download the browser extension and get a lot of value from it, but you can go even deeper by picking up the mobile app as well. This lets you get mobile alerts on shopping deals and check if there are deals on in-store items as well.

Pros of Capital One Shopping

- Free

- Easy-to-use browser extension

- Trending deals and featured offers

- Shopping credit for shopping like normal

- Price drop alerts on products you want

- Mobile app can check in-store items as well

- Automatically applies coupon codes during checkout like Honey

- Backed by an active and large company, which usually means faster bug fixes and more deals.

Cons of Capital One Shopping

- Mobile app user experience isn’t great but is improving.

- They collect a lot of private information about you.

Where to Download

Download Capital One Shopping here.

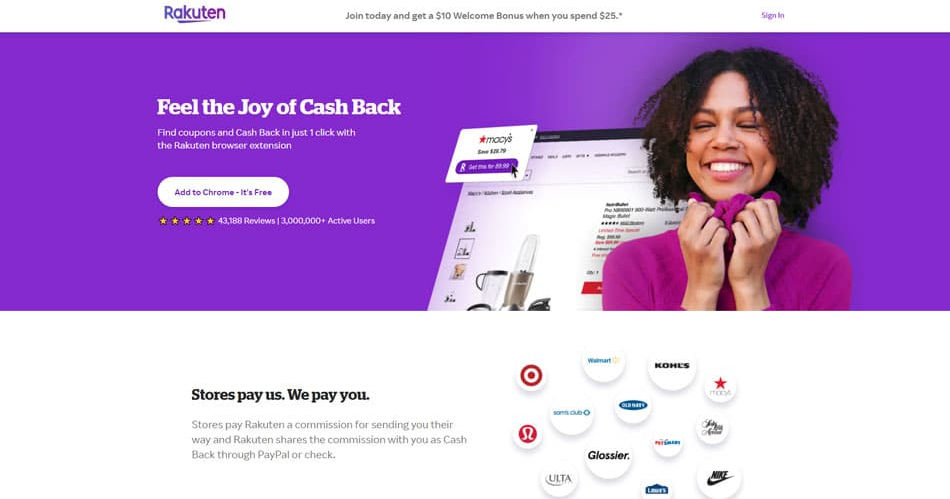

2. Rakuten

Rakuten is incredibly popular, and it’s no surprise why. This one-stop-shop service works with retailers to bring you exclusive cashback deals, negotiates coupon deals for its members, and has partnerships with thousands of retailers from Amazon to Target.

How Rakuten Works

Rakuten gets a kickback for every purchasing user they send to retailers, and then they share that kickback with you. You become eligible for these cashbacks by clicking from Rakuten into whatever retailer you’re shopping from.

The typical user experience with Rakuten involves seeing an extension notification and clicking through it to earn from it OR getting in the habit of going directly to Rakuten’s site to see if there are deals on items you’re thinking about buying. For example, if you know you’re looking to grab a Nintendo Switch for your nephew this Christmas, you could go to Rakuten with that in mind to see if there are any relevant kickbacks.

Pros of Rakuten

- Free

- Earn anywhere from 1-40% cashback on almost anything purchased through Rakuten.

- Thousands of retailers

- Get paid out in cash

- In-store earning opportunities

- Easy browser extension

- Mobile app

Cons of Rakuten

- Quarterly payouts

- Sometimes the best products at retailers aren’t eligible for cashback.

- Have to get in the habit of going from their site or extension to a retailer.

Where to Download

Download Rakuten here.

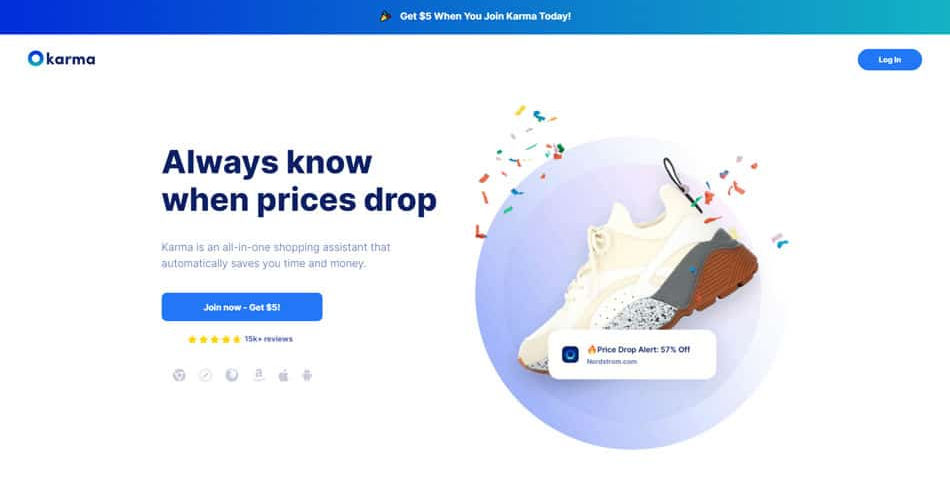

3. Karma (formerly Shoptagr)

Karma is another extension and app you can use to get price drop alerts, automatically apply coupons, make wishlists, and organize future purchases.

How Karma Works

The secret to Karma’s success is in its tech: a predictive analytics bot helps you make better shopping decisions before you buy, whether that means opting for a better price, applying coupons, or waiting until the price drops even more.

The best way to use Karma is to set up what you know you’d like to buy in advance and then wait as price drop alerts roll in. If you like to start shopping for the actual Christmas right after Christmas in July, this is right up your alley. You can set up your wishlist, organize your future purchases by category, and wait for that ping. Then you’ll know it’s the best time to pick it up!

Pro Tip: Combine these alerts with CamelCamelCamel’s Amazon price history (alternative #6 in this list) to see if the deal is as good as Karma says it is.

Pros of Karma

- Free

- Wishlist for out-of-stock items

- Price drop alerts

- Organize future purchases by category

- Coupons automatically applied

- Extension works on Chrome, Firefox, and Safari

- Pays out via PayPal

- Mobile app

Cons of Karma

- Not as many stores as other options

- App and extension can be a bit buggy.

- Sometimes the best products at retailers aren’t eligible for cashback.

Where to Download

Download Karma here.

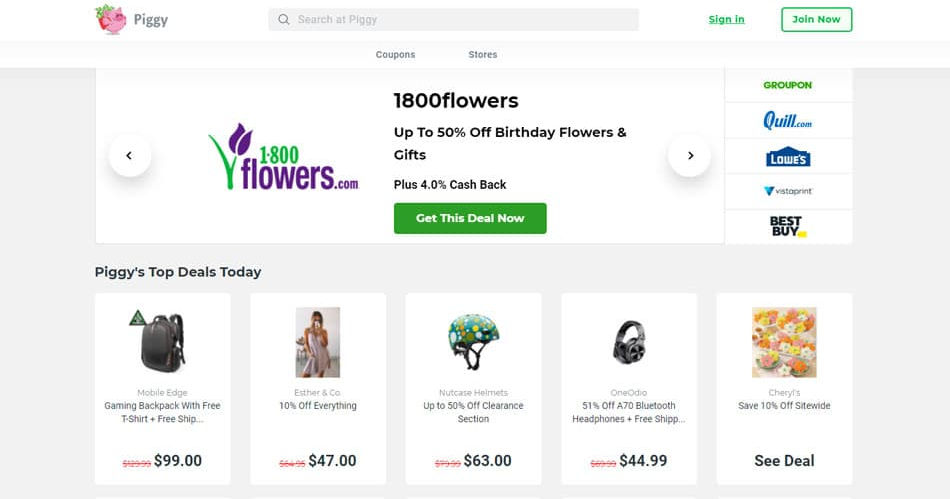

4. Piggy

Piggy focuses exclusively on cashback opportunities from partner retailers. While it doesn’t have the resources and depth some of the other options listed have, it can still be useful to have in your cashback mix.

How Piggy Works

By acting as a hub for shopping traffic, Piggy can cash in on affiliate relationships and pass some of that cash back to you. All you have to do is download the extension and wait to get pinged as you shop. When that happens, you have the option of buying through Piggy and earning cash back.

Pros of Piggy

- Free

- Convenient browser extension

- Automatic coupons

- Get paid via check.

Cons of Piggy

- $25 cash out floor.

- Not as many retailers as other options.

- Not well designed.

Where to Download

Download Piggy here.

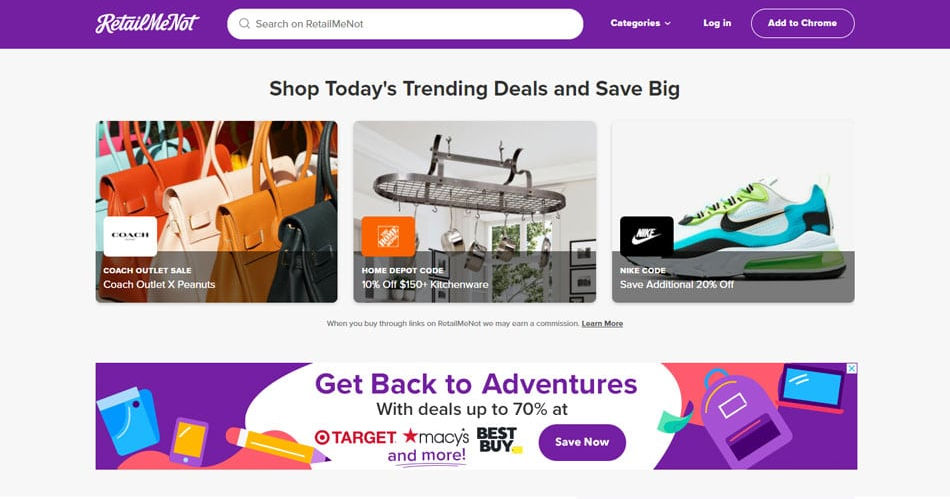

5. RetailMeNot

While RetailMeNot doesn’t have as many retailers as Capital One Shopping or Rakuten, it gets its own exclusive deals and opportunities that are definitely worth keeping an eye on. It also releases its own buying guides and offers advice on how to get the most out of your experience.

How RetailMeNot Works

Similar to Rakuten, RetailMeNot is best to use with a plan in mind. Apart from the extension, your best bet is to subscribe to RetailMeNot’s newsletter and check their website out periodically for any exclusive offers.

Once you see something that’s on your “dream” or “to-buy” list, you can snatch it up while the offer is good. Alternatively, you can just go to RetailMeNot to see if anything on sale catches your eye!

Pros of RetailMeNot

- Free

- Automatically applied coupons

- Exclusive deals

- Mobile app

Cons of RetailMeNot

- Only supported on Chrome

- Not as many retailers as some other options

- Site design isn’t as sharp

Where to Download

Download RetailMeNot here.

6. The Camelizer

The Camelizer is the browser extension for long-time Amazon price tracker CamelCamelCamel, and it’s a fantastic resource for seeing just how good that new “sale” is. For example, if you’re shopping a summer sale in July, you can see if last fall’s prices were better than what you’re seeing now.

How The Camelizer Works

CamelCamelCamel is free for any user, and it’s best to use alongside the other options above. Consider CamelCamelCamel your coupon “hype manager.” In other words, it’s the voice of reason when an extension pings you with another AMAZING deal.

Because you can see just how good the deal is by comparing previous prices (assuming the item was sold on Amazon), you can tell whether this is the sale to buy on or whether you should wait for the next one. You can get all of that info by clicking into the Camelizer on any Amazon listing. You can also set up price drop alerts on any items you know you want to buy.
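The comparison described above can be sketched in a few lines of Python. This is only an illustration of the reasoning, not how the Camelizer itself works: the function name, the price history, and the three verdicts are all hypothetical.

```python
def judge_deal(current_price, past_prices):
    """Compare a sale price against a list of earlier prices for the same item."""
    lowest = min(past_prices)
    average = sum(past_prices) / len(past_prices)

    if current_price <= lowest:
        return "lowest price on record"
    if current_price < average:
        return "below average, but it has been cheaper"
    return "not a real discount"


# Hypothetical price history for one item, oldest to newest.
history = [299.0, 279.0, 249.0, 289.0]

# Hypothetical "sale" price an extension just pinged you about.
verdict = judge_deal(259.0, history)
print(verdict)
```

With these made-up numbers, the sale price beats the average but not the lowest price on record, so it may be worth setting a price drop alert and waiting.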

Pros of The Camelizer

- Free

- Top price drops for best Amazon deals

- Awesome price history graphs

- Good for seeing how good a sale really is

- Works on Chrome, Firefox, Edge, Opera, and Safari

Cons of The Camelizer

- Design isn’t super friendly

- Is exclusive to Amazon

- No cashback or earning potential

Where to Download

Download The Camelizer here.

The Bottom Line on Apps Like Honey

Many of these apps function similarly, and each of them has different exclusive deals and events. So again, the ideal solution is actually a combination. Download a few of these extensions and compare them whenever you’re shopping to see what the lowest price is.

The best thing you can do with these apps is get in the habit of checking them before you actually order. In other words, once you know you’re going to buy something, take another 5 minutes and see if you can save some cash through these sites and apps! If you make this a buying habit, you’ll definitely save some dough.
