
It’s probably not the career path your mother would have chosen for you, but maybe it’s time for her to reevaluate.

Video games have become a titan of the entertainment industry over the last few decades, with the market reaching $35.4 billion in revenue in 2019. For context, that’s more than three times the revenue made by the music industry and 83% of the money made by the movie industry in the same year[*].

You’re probably familiar with some of the ways to make money playing video games: major streamers like Ninja earn serious cash, and eSports air on ESPN. But did you know you can turn your favorite hobby into cash even outside of a professional context?

While pursuing a professional career is an option, you don’t have to be a major league gamer to pad your savings account while waiting for your next doctor’s appointment or standing in line at the DMV.

There are so many ways to make money playing video games, but most of them aren’t worth it. We’ve done our research and pulled out the options that are worth considering. We tell you the honest truth — what you choose to do with it is your decision.

Some of these have the chance to be lucrative; most do not. Some follow more traditional career paths; others call for an entrepreneurial spirit. Some pay you immediately; others require content and time investment.

Take a close read through to figure out which option is best for you.

1. User Test Video Games for Large Video Game Developers

It’s not surprising once you stop and think about it, but every game ever released needs to be tested. Think of it like writing a book, except that the sentences aren’t linear. Someone has to playtest every aspect of a game before its release, and companies like Blizzard, EA, and Ubisoft all employ full-time and contracted video game testers.

The money can be better than you think. Video game testers can easily earn $50,000+ a year, but keep in mind this is a demanding, full-time position. You aren’t just lazily playing video games. Here’s an idea of what you would be doing:

- Carefully performing “matrix” tests to “break” a game – e.g. exploiting balancing strengths and weaknesses in a MOBA game.

- Writing down articulate and meticulous thoughts around new versions and the issues you encountered. You can’t say, “The game was choppy”. You’d have to say something more along the lines of, “Version 1.32’s load time between cutscenes clocked in at 12 seconds, which is much longer than the 4-second benchmark we established”.

- Attending meetings and relaying your findings to the developer team.

- Staying on top of bug fixes and reminding the team to resolve them.

A common complaint about the job is the lack of upward mobility. Where do you go from being a user tester? There is no concrete answer.

How to Get Started

Check out some job boards and see if any positions are available.

Here are some companies that offer user-testing positions:

- Nintendo: How cool would this be? The only catch is you have to live in Redmond, Washington since it’s where their American headquarters is.

- Blizzard: User-testing jobs at Blizzard are hard to find, but you may be able to snag one if you keep a close eye on their job listings.

- Rockstar: Same idea here. These jobs don’t come up often at Rockstar but you may be able to pick one up if you’re lucky. And remember these are legitimate jobs and should be treated seriously.

2. User Test Video Games via Smaller Third-Party Testing Sites

There are also generalized video game sites where you can sign up to test all sorts of games. Some of these require you to take voice and video recordings as you play them, others don’t. It’s essentially a product testing platform and the jobs depend on the various video game designers’ needs.

How to Get Started

Start enrolling and checking out gigs on a few user-testing sites.

Here are a few third-party user testing sites to get you started:

- Beta Testing: Beta Testing was previously known as Erli Bird, and it’s a general platform for businesses to hire users to test their apps, games, and software.

- UserTesting: Similar to Beta Testing, this site has a broad set of user testing opportunities but video game gigs do show up from time to time.

- PlaytestCloud: This is a mobile app testing site, so you can find mobile games to test here. On their site, they mention that pay varies, but they give an example of a $9 test for 15 minutes of playtime.

3. Use Mistplay or Other Mobile Apps

There are a couple of mobile gaming apps that make their cash through ads and user data while paying users for their time. You’ll have to play what they want you to, but if you aren’t too picky then these apps could be good for you. You won’t make more than a few bucks a month, but it’s not a bad way to spend downtime.

How to Get Started

Download a few mobile gaming apps and see which one you enjoy the most.

Here are a few mobile gaming app options:

- Mistplay: Play games and earn cash. This is best for mobile gamers who don’t mind opting in to play random games. You won’t get more than a couple of bucks a month, though.

- Bananatic: Online platform where you play for points that you can redeem for gift cards and other offers.

- AppNana: A mobile reward app that gives you rewards for completing small tasks and playing games. You can then redeem them for Xbox gift cards and other prizes.

4. Use Sites Like Swagbucks or InboxDollars

If you aren’t picky about the video games you play and are interested in a generalized approach to spending downtime, then Swagbucks and InboxDollars are two of the “make money online” juggernauts to consider.

Both services are pretty similar. Basically, you create an account, fill out some basic information about yourself, and then there are a wide variety of ways you can collect “bucks” or “cash”. These options include taking surveys, watching ads, playing games, and much more.

You won’t make anywhere near a liveable income from these sites, but they are good options to have around if you’re bored and looking for ways to gamify your discretionary spending.

How to Get Started

Create an account on either Swagbucks or InboxDollars and start playing some games!

Here are a few links to “make money online” sites:

- Swagbucks: The biggest player in the “make money online” space. You can do everything from playing games to watching movie trailers to filling out surveys, then turn your earned points into cash.

- InboxDollars: This service is very similar to Swagbucks, and many people mix and match between the two. Take a look at the video game options on both sites and see which ones are more fun.

5. Make a YouTube Channel or Twitch Stream

This is the most popular way to make money with video games at the moment. Just like your last Los Angeles Uber driver, you could consider starting your own YouTube channel or Twitch stream.

Isn’t that extremely difficult? Well, yes. Of course. Being successful as an online video gamer and streamer requires at least one of these three skills, if not all:

- A particularly good knack for video games.

- A charismatic personality.

- A unique spin and/or special attention to production value.

Just opening up a streaming account and starting isn’t enough to get people to stick around. You need a hook. That can be your ability to play, the way you have fun while you play, the way you present the material, or any combination of the above.

How to Get Started

Take some time to think critically about how to approach your YouTube or Twitch channel by using the advice below:

Take a close look at existing streamers. What could you do differently? How can you make video games entertaining beyond just playing them? What about you could become your unique value proposition? Think carefully before investing your time here, because most people don’t make it.

Here are some major streaming sites to think about starting on:

- Twitch: The ultimate video gaming streaming site. The competition here is difficult, but you won’t find a platform with a wider potential audience.

- YouTube Gaming: YouTube’s live streaming is good, though not as robust as Twitch’s. In exchange, it lets you post compilations and other types of video game content, so you can ultimately build a broader channel than you could on Twitch.

- DLive: A newer blockchain streaming service that focuses on audience building and community rewards. This one is likely to be less saturated than the others.

6. Enter Tournaments

One skill-based opportunity is playing in tournaments. Large games like Overwatch, Apex Legends and Fortnite regularly host tournaments.

If you are aiming (wordplay intended) for a skill-based entry into the video game market, then you need to prove to others (and yourself) that you have what it takes to win. Start training and start competing. If you aren’t seeing meaningful progress or results after a period of pursuit, consider moving on.

My advice on this is two-fold:

- Choose a game with longevity to invest in. Considering how long StarCraft II has been around, there is a decent chance StarCraft II will fizzle out over the next few years. That means it’s probably not the best time to start skilling up.

- Choose a game that has existing hype or the potential for growth. Ideally, you will catch a wave like Ninja did with Fortnite.

How to Get Started

Find an upcoming tournament with players you know are around your level, start practicing, and get to work.

Here are a few video game tournament sites:

- Battlefy: Battlefy hosts a bunch of Rocket League, League of Legends, and Hearthstone tournaments, but it also has a lot of depth in the types of tournaments you can enter. The competition is tough but the prizes are high!

- Gfinity Esports: Gfinity tournaments are extremely competitive and run by a well-respected company within the gaming world.

- WorldGaming: WorldGaming is a huge network with a variety of small and large tournaments you can compete in. If you want to get your feet wet, this is a good choice.

7. Consider Becoming a Video Game Coach

If you have some sort of professional record in the video games space, have an entrepreneurial spirit, don’t mind selling yourself, and are a good teacher, then you could look into becoming a video game coach.

The niche is small but growing, and if you can position yourself around certain video games and also build in essential networking and life-skills training apart from the pure “wins” results, then you may have a business opportunity on your hands.

How to Get Started

Take a hard look at your credentials and see if this path makes sense and if you want to give it a shot. Then, start thinking like an entrepreneur using the advice below.

Your best bet from a marketing perspective is either wealthy college kids or upper-income parents with kids interested in pursuing this full-time. Your approach will need to change according to the demographic you’re pursuing, and always remember whose pocketbook will be paying you.

I’d also pick up some Business 101 books to make sure you understand exactly what you’re getting into.

Here are a few sites you can use to start finding clients:

- Gamer Sensei: You have to apply to be a Sensei and give a bit of your cut to this site, but they will provide you with students if you get accepted.

- Fiverr: Fiverr is a general freelancing site where you list your services as gigs that clients can buy. Set up your profile and start offering coaching sessions!

- Facebook Gaming Groups: After you’ve set up your online site and portfolio via LinkedIn etc., you could join gaming groups, get involved in those communities, and then start making connections with aspiring players.

8. Write Video Game Articles or Reviews

This is more of a long play to build an audience, but if you love writing and reviewing games, then you should consider publicizing and monetizing your work. You can do this in two main ways:

- By pitching to existing gaming publications.

- By building up your own channel/brand specifically built around video game reviews.

Each option comes with its own challenges. If you are pitching to publications, you need to send ideas, articles, etc. that are an exact fit for their brand. Remember, people are lazy — the key to networking is to reduce the friction required for anyone to do what you want them to do. If you can pitch an article at the right time that’s perfectly on brand, then you may open some doors and get paid for your article.

To get an idea of how to cold-email journalism sites and land gigs, check out Toby Howell’s incredible cold email sequence, which he used to land a job at one of the most prominent email newsletters out today, Morning Brew. It’s brilliant and well worth the read if you plan on pitching articles to anyone anytime soon.

If you’re looking to build your own channel or audience, whether it’s directly or adjacently related to video games, then there are a few ways to think about it. You either need to:

- Approach video game reviews in a style that feels “fresh” or new. This can mean adopting a certain ploy or brand feature (e.g., always including a video game developer in your reviews) or developing your own video production style.

- Use an approach that’s popular but do it better. This is very difficult to do and requires a lot of expertise or time developing that expertise.

In reality, it’s very difficult to make a significant income writing video game reviews and articles, so your best bet is to look at it as a passionate side hustle.

How to Get Started

Start writing and reviewing video games publicly and either use them to pitch to existing publications or post them directly on your own platform.

Here are a few publications to pitch to and reviewers to emulate:

- IGN: IGN is a powerhouse player in video games journalism. Remember to make your pitch as specific and actionable as possible. Good luck!

- Polygon: Polygon is an entertainment review and news company that is always up to hear good pitches from freelancers. Use this link to review their submission guidelines.

- Mandalore Gaming: Mandalore Gaming is a great example of a one-person shop for video game reviews. Check out how well they review games and conduct audience building.

- Gameranx: Gameranx is an even bigger YouTube operation than Mandalore Gaming, and they specialize in compilation and “tips and tricks” videos. Take a look through their top videos to get some inspiration for your own channel.

Bonus: Expand Your Definition of “Getting Paid to Play Video Games”

What about avenues that don’t directly involve playing video games? As we mentioned, the video games industry is massive, so if you enjoy video games, I encourage you to look beyond strictly playing video games for money.

You could:

- Become a video game story writer

- Edit scripts for video game dialogue

- Conduct research for a team

- Market video games

- Become a video game developer

There are tons of options once you open this angle up. Yes, this doesn’t involve pwning noobs for $100k/year, but on the other hand, the world is your… Kirby?

Plus, working alongside your passion instead of in it is often a good way to preserve your joy and love for it. It’s easy to get burned out on something you love once it’s your actual job — just ask any musician after a long touring leg!

The Bottom Line on Getting Paid to Play Video Games

If you’re looking to make a few extra bucks in your downtime, then there are definitely options for you to make money playing video games. Check out sites like Swagbucks and Mistplay and use them to pick up some extra cash in time you’d normally be wasting anyway.

Heck, you could even use the money you earn from those to buy major releases you’re really looking forward to playing.

And if you’re looking to make it big in video games, that’s fine to pursue. Just make sure you’re strategic about it and recognize that most people aren’t able to make a living playing video games. Fortunately, there are all sorts of fun and interesting ways to build video games into your life.

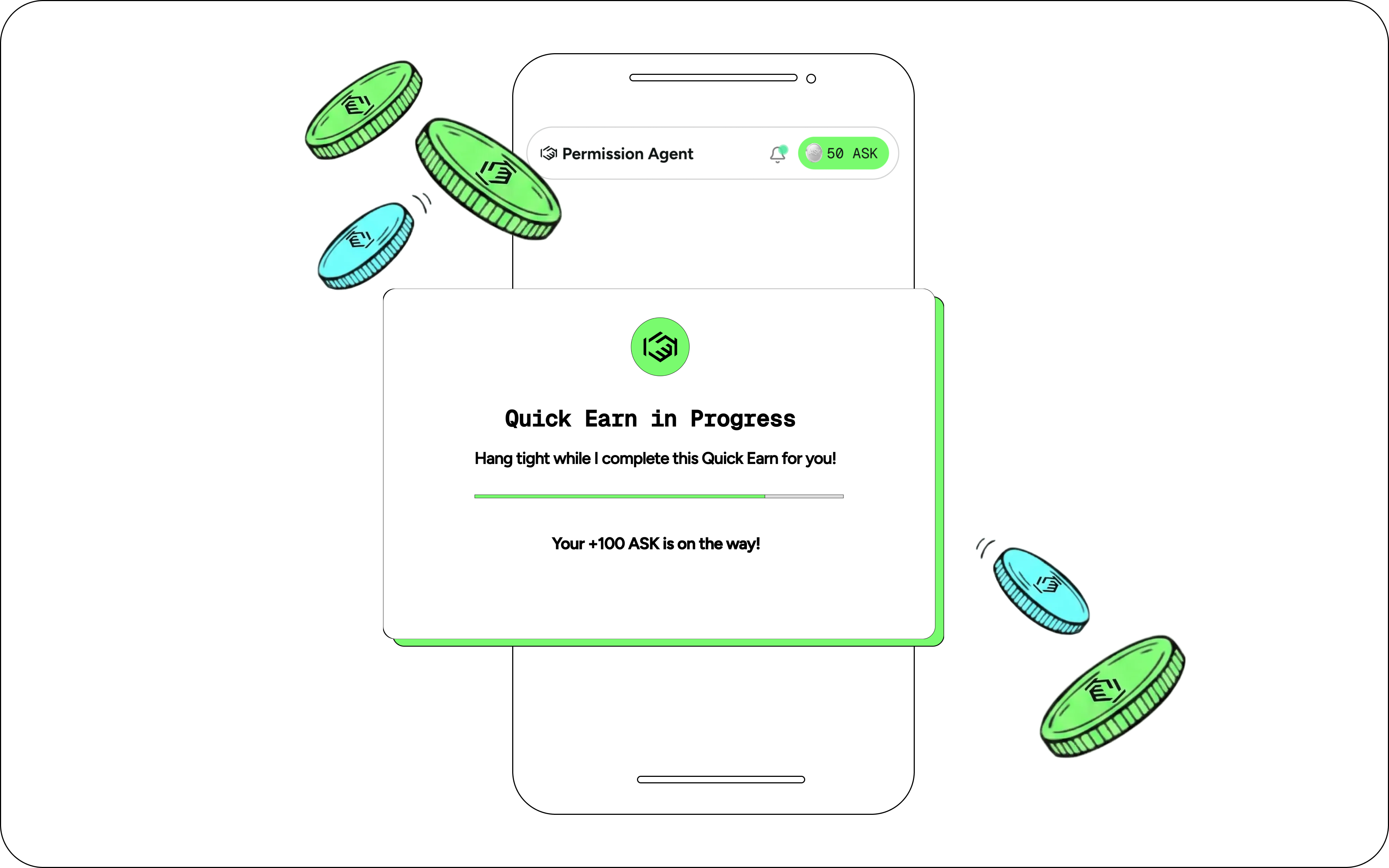

Permission is changing the internet as we know it by paying users for sharing their data while browsing the web instead of allowing advertising companies to use it for free. Check out their Browser Extension which lets you passively earn crypto as you use the internet.

See how we’re giving the power of data ownership back to the people.
